Juno RAG Knowledge Base Platform

A Retrieval-Augmented Generation (RAG) platform for building custom knowledge bases from documents and web content, featuring hybrid search, cross-encoder re-ranking, and multi-tenant support.

PROJECT OVERVIEW

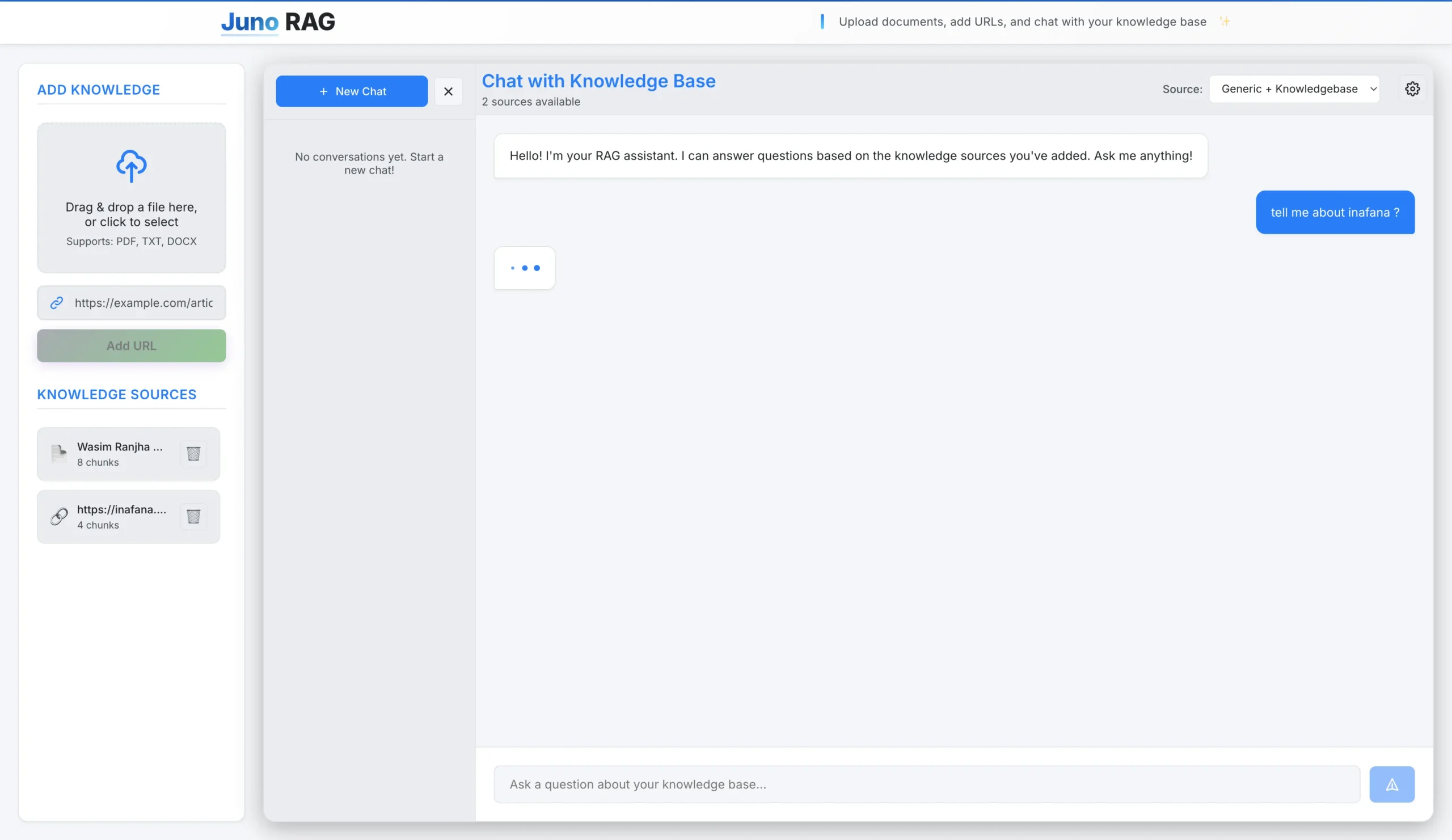

Juno RAG is a production-ready Retrieval-Augmented Generation platform that lets users build knowledge bases from their own documents and web content. It combines a retrieval pipeline (hybrid search plus re-ranking) with a web chat interface to deliver accurate, context-aware answers grounded in those custom sources.

KEY FEATURES

- Multi-Format Document Processing - Supports PDF, TXT, and DOCX files with advanced text extraction including OCR capabilities for scanned documents

- Web Content Integration - Extract and index content from web URLs, making web articles and documentation searchable

- Hybrid Search Technology - Combines semantic search (understanding meaning) with keyword search (BM25) for comprehensive document retrieval

- Intelligent Re-Ranking - Uses cross-encoder models to re-evaluate and re-order search results for maximum relevance

- Conversation Memory - Maintains conversation history and context across multiple interactions for more natural dialogue

- Multi-Tenant Architecture - Secure session-based user isolation ensuring data privacy and security

- Source Citation - Every response includes references to the source documents used, ensuring transparency and verifiability

- Confidence Scoring - Provides confidence metrics for each response, helping users understand answer reliability
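The keyword half of the hybrid search is described only as BM25. As an illustration, Okapi BM25 scoring can be sketched in pure Python as below. The tokenizer, the defaults k1 = 1.5 and b = 0.75, and the class name `BM25` are assumptions for this sketch, not the platform's actual code, which may rely on a library instead.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Deliberately simple: lowercase, keep alphanumeric runs, no stemming.
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25:
    """Okapi BM25 over a small in-memory list of text chunks (illustrative sketch)."""

    def __init__(self, docs: list[str], k1: float = 1.5, b: float = 0.75) -> None:
        if not docs:
            raise ValueError("BM25 needs at least one document")
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        # Average chunk length; fall back to 1.0 so empty chunks cannot divide by zero.
        self.avgdl = sum(len(d) for d in self.docs) / len(self.docs) or 1.0
        # Document frequency: in how many chunks each term occurs.
        df = Counter(term for d in self.docs for term in set(d))
        n = len(self.docs)
        # IDF with +1 inside the log so common terms never get a negative weight.
        self.idf = {t: math.log((n - f + 0.5) / (f + 0.5) + 1) for t, f in df.items()}

    def score(self, query: str, idx: int) -> float:
        doc = self.docs[idx]
        tf = Counter(doc)
        total = 0.0
        for term in tokenize(query):
            freq = tf.get(term, 0)
            if freq == 0:
                continue
            # Term-frequency saturation (k1) and length normalization (b).
            norm = freq + self.k1 * (1 - self.b + self.b * len(doc) / self.avgdl)
            total += self.idf[term] * freq * (self.k1 + 1) / norm
        return total

    def rank(self, query: str, top_k: int = 5) -> list[tuple[int, float]]:
        """Return (chunk_index, score) pairs, best first."""
        scores = [(i, self.score(query, i)) for i in range(len(self.docs))]
        scores.sort(key=lambda pair: pair[1], reverse=True)
        return scores[:top_k]


chunks = [
    "Invoices are due within 30 days.",
    "Refunds require a receipt.",
    "Contact support for refunds after 30 days.",
]
bm25 = BM25(chunks)
print(bm25.rank("refunds receipt", top_k=2))  # chunk 1 ranks first
```

BM25 rewards exact term matches (product codes, names, error strings) that embedding similarity can miss, which is why it is paired with semantic search rather than replacing it.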

The platform has a React frontend with a dark-themed UI, drag-and-drop file uploads, and a real-time chat interface. The FastAPI backend is built for production use and adds prompt engineering, query expansion, and multi-provider LLM support (OpenAI, Anthropic, Groq).
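Multi-provider support with fallback can be pictured as an ordered chain of providers tried in turn. This sketch assumes each provider is wrapped in a hypothetical adapter function that takes a prompt string and returns an answer; the vendor SDK calls themselves are deliberately left out, and the names below are illustrative.

```python
from typing import Callable

# A provider is a (name, call) pair where call(prompt) returns the model's answer.
# The callables are hypothetical adapters; real ones would wrap each vendor's SDK.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has raised an error."""


def generate_with_fallback(prompt: str, providers: list[Provider]) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, answer) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # e.g. timeouts, rate limits, auth errors
            errors.append(f"{name}: {exc!r}")
    raise AllProvidersFailed("; ".join(errors) or "no providers configured")


# Stand-in providers: the first always times out, the second echoes the prompt.
def unavailable_provider(prompt: str) -> str:
    raise TimeoutError("request timed out")


def echo_provider(prompt: str) -> str:
    return f"answer to: {prompt}"


chain = [("openai", unavailable_provider), ("groq", echo_provider)]
print(generate_with_fallback("What is BM25?", chain))  # ('groq', 'answer to: What is BM25?')
```

Returning the provider name alongside the answer makes it easy to log which backend actually served each response.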

TECHNICAL ARCHITECTURE

The system uses ChromaDB as a persistent vector database, storing document embeddings locally for fast semantic search. Documents are split into overlapping chunks so that context is preserved across chunk boundaries, and each chunk is converted into a high-dimensional embedding vector using a SentenceTransformers model. When a user asks a question, the system runs a hybrid search to find relevant chunks, re-ranks them with a cross-encoder, and sends the top results to the LLM, which generates an answer grounded in that context.
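Overlapping chunking can be sketched as a sliding window. This version counts characters and uses hypothetical defaults of 500 characters per chunk with 100 characters of overlap; the platform's real chunk sizes, and whether it splits on tokens, sentences, or characters, are not stated.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 100) -> list[str]:
    """Split text into fixed-size character windows; neighbours share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap  # how far each new window starts after the previous one
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


print(chunk_text("abcdefghij", chunk_size=4, overlap=2))  # ['abcd', 'cdef', 'efgh', 'ghij']
```

The overlap means a sentence cut by one boundary still appears whole in the neighbouring chunk, at the cost of storing and embedding some text twice.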

Additional features include query analysis and expansion for complex questions, source pre-filtering to discard irrelevant content before generation, and two knowledge-source modes: knowledgebase-only or generic+knowledgebase. For security, the platform relies on backend-controlled session management and isolates each user's data at the database level.
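Backend-controlled sessions mean the server, not the browser, decides who the user is. A minimal sketch, assuming an in-memory session table and one vector-store collection per user (the `SessionStore` and `collection_for` names and the `kb_` prefix are hypothetical), might look like this:

```python
import hashlib
import secrets


class SessionStore:
    """Server-side session table (in memory here; a real deployment would persist it)."""

    def __init__(self) -> None:
        self._sessions: dict[str, str] = {}  # session token -> user id

    def create(self, user_id: str) -> str:
        token = secrets.token_urlsafe(32)  # unguessable token generated by the backend
        self._sessions[token] = user_id
        return token

    def resolve(self, token: str) -> str:
        """Map a token sent by the client back to a user id, or refuse the request."""
        user_id = self._sessions.get(token)
        if user_id is None:
            raise PermissionError("unknown or expired session")
        return user_id


def collection_for(user_id: str) -> str:
    """Derive the user's private collection name from the server-side user id only."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()[:16]
    return f"kb_{digest}"


store = SessionStore()
token = store.create("alice")
print(collection_for(store.resolve(token)))
```

Because the collection name is derived from the user id the server looked up, a client cannot reach another tenant's data by editing a request field.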

THE REQUIREMENT

The client needed a sophisticated RAG (Retrieval-Augmented Generation) system that could transform their document collections and web content into an intelligent, queryable knowledge base. The solution required the ability to answer questions accurately based solely on the provided documents, with full transparency about information sources.

PRIMARY REQUIREMENTS

- Document Processing - Support for multiple file formats (PDF, DOCX, TXT) with robust text extraction, including handling of scanned documents through OCR technology

- Web Content Integration - Ability to extract and index content from web URLs, making online documentation and articles searchable

- High-Accuracy Search - Implement advanced search capabilities that go beyond simple keyword matching to understand semantic meaning and context

- Source-Grounded Responses - Ensure all answers are based exclusively on the provided knowledge base, without hallucinated content; generic (non-knowledge-base) responses are allowed only when explicitly enabled

- User Experience - Provide an intuitive, modern web interface for document management and conversational interaction

- Scalability & Security - Support multiple users with secure data isolation and session management
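Source-grounded answers and the two knowledge-source modes are largely a matter of how the prompt is assembled. The sketch below numbers each retrieved chunk so the model can cite it; the `Chunk` type, the mode strings, and the instruction wording are illustrative assumptions rather than the platform's actual prompt.

```python
from dataclasses import dataclass

# Hypothetical mode identifiers mirroring the two knowledge-source modes.
MODES = ("knowledgebase", "generic+knowledgebase")


@dataclass
class Chunk:
    source: str  # file name or URL the chunk came from, used for citation
    text: str


def build_prompt(question: str, chunks: list[Chunk], mode: str = "knowledgebase") -> str:
    """Assemble a prompt whose numbered context blocks the model can cite as [n]."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode!r}")
    context = "\n\n".join(
        f"[{n}] ({chunk.source})\n{chunk.text}" for n, chunk in enumerate(chunks, start=1)
    )
    if mode == "knowledgebase":
        rule = ("Answer ONLY from the numbered context. If the context does not "
                "contain the answer, say that you don't know.")
    else:
        rule = ("Prefer the numbered context. You may add general knowledge, "
                "but mark it clearly as not coming from the sources.")
    return (f"{rule}\nCite the supporting source as [n] after each claim.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}\nAnswer:")


print(build_prompt("When are invoices due?",
                   [Chunk("billing.pdf", "Invoices are due within 30 days.")]))
```

Keeping the chunk numbering and source names in the prompt is what lets the response's [n] markers be mapped back to documents for the citation display.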

SPECIFIC TECHNICAL REQUIREMENTS

- Hybrid Search Implementation - Combine semantic vector search with keyword-based BM25 search to capture both meaning-based and exact-term matches

- Re-Ranking System - Implement cross-encoder re-ranking to improve search result relevance by evaluating query-document pairs together

- Intelligent Chunking - Split large documents into appropriately sized, overlapping chunks that preserve context while improving search precision

- Conversation Management - Maintain conversation history and context to enable natural, multi-turn dialogues

- Multi-Provider LLM Support - Support multiple LLM providers (OpenAI, Anthropic, Groq) with fallback mechanisms for reliability

- Query Analysis & Expansion - Analyze query complexity and expand queries for better retrieval, especially for complex or multi-part questions

- Source Citation - Track and display which source documents were used to generate each response

- Confidence Scoring - Provide confidence metrics to help users assess answer reliability

- Production-Ready Architecture - Implement error handling, file size limits, validation, and proper API documentation

- Cross-Platform Compatibility - Ensure compatibility across different operating systems, with special attention to macOS-specific issues
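The requirements do not say how semantic and BM25 results are merged. One common choice, used here as an assumption, is reciprocal rank fusion (RRF) with the conventional constant k = 60, followed by re-ranking with a pairwise scorer. In the real system that scorer would be a cross-encoder (for example, a sentence-transformers `CrossEncoder`, whose `predict` method scores (query, passage) pairs); in this sketch it is any function of (query, text).

```python
from typing import Callable


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of chunk ids; each appearance at rank r adds 1 / (k + r)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)


def rerank(query: str, candidates: list[str],
           score_pair: Callable[[str, str], float], top_n: int = 3) -> list[tuple[str, float]]:
    """Score each (query, candidate) pair jointly and keep the best top_n."""
    scored = [(text, score_pair(query, text)) for text in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


semantic_hits = ["c1", "c2", "c3"]  # ids from vector search, best first
keyword_hits = ["c3", "c1", "c4"]   # ids from BM25, best first
print(reciprocal_rank_fusion([semantic_hits, keyword_hits]))  # ['c1', 'c3', 'c2', 'c4']
```

RRF uses only ranks, so it sidesteps the problem that cosine similarities and BM25 scores live on different scales. The cross-encoder step is slower per pair, which is why it is applied only to the short fused candidate list.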

PERFORMANCE REQUIREMENTS

The system needed to handle large documents efficiently, process queries quickly, and maintain good response times even with extensive knowledge bases. The architecture required lazy loading of models, optimized embedding generation, and efficient vector search operations.
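Lazy loading means the embedding and re-ranking models are loaded on first use rather than at startup. A minimal thread-safe wrapper, with a hypothetical `LazyModel` name and a stand-in loader in place of a real model, could be:

```python
import threading
from typing import Callable, Generic, Optional, TypeVar, cast

T = TypeVar("T")


class LazyModel(Generic[T]):
    """Defer an expensive load (e.g. an embedding or cross-encoder model) until first use."""

    def __init__(self, loader: Callable[[], T]) -> None:
        self._loader = loader
        self._instance: Optional[T] = None
        self._loaded = False
        self._lock = threading.Lock()

    def get(self) -> T:
        # Fast path: once loaded, no locking is needed.
        if not self._loaded:
            with self._lock:
                # Re-check inside the lock so concurrent first requests load only once.
                if not self._loaded:
                    self._instance = self._loader()
                    self._loaded = True
        return cast(T, self._instance)


# Stand-in loader that counts how often it runs.
load_count = 0


def fake_loader() -> str:
    global load_count
    load_count += 1
    return "model-weights"


model = LazyModel(fake_loader)
print(model.get(), model.get(), load_count)  # model-weights model-weights 1
```

In practice the loader might be something like `lambda: SentenceTransformer("all-MiniLM-L6-v2")`; that model name is an assumption, not something the document specifies. The benefit is a fast server start, with the load cost paid once by the first request that needs the model.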

SECURITY REQUIREMENTS

Implement secure multi-tenancy where each user's data is completely isolated. Use backend-controlled session management to prevent client-side manipulation of user identities. Ensure all file uploads are validated for type and size, with proper error handling for malicious or corrupted files.
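Upload validation for type and size might look like the sketch below. The 20 MB limit, the UTF-8 requirement for TXT files, and the function name are assumptions. The signature checks only confirm that a PDF starts with `%PDF-` and that a DOCX is a ZIP container, so a full parser is still needed to catch corrupted files.

```python
import os

MAX_UPLOAD_BYTES = 20 * 1024 * 1024  # hypothetical 20 MB limit; the real limit is not stated
ALLOWED_EXTENSIONS = {".pdf", ".docx", ".txt"}


def validate_upload(filename: str, data: bytes, max_bytes: int = MAX_UPLOAD_BYTES) -> str:
    """Return the normalized extension, or raise ValueError if the upload is rejected."""
    ext = os.path.splitext(filename)[1].lower()
    if ext not in ALLOWED_EXTENSIONS:
        raise ValueError(f"unsupported file type: {ext or '(none)'}")
    if not data:
        raise ValueError("empty file")
    if len(data) > max_bytes:
        raise ValueError(f"file exceeds {max_bytes} bytes")
    # Check content signatures so a renamed file cannot masquerade as another type.
    if ext == ".pdf" and not data.startswith(b"%PDF-"):
        raise ValueError("not a valid PDF")
    if ext == ".docx" and not data.startswith(b"PK\x03\x04"):
        raise ValueError("not a valid DOCX (ZIP) container")
    if ext == ".txt":
        try:
            data.decode("utf-8")
        except UnicodeDecodeError:
            raise ValueError("text file is not valid UTF-8") from None
    return ext


print(validate_upload("report.pdf", b"%PDF-1.7\n..."))  # .pdf
```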

CORE FEATURES

- Multi-format document upload and processing (PDF, TXT, DOCX)

- Web URL content extraction and indexing

- Hybrid search combining semantic and keyword matching

- Cross-encoder re-ranking for improved search accuracy

- Conversation memory and context management

- Multi-tenant architecture with secure user isolation

- Source citation and transparency in responses

- Confidence scoring for answer reliability

- Query analysis and intelligent expansion

- Real-time chat interface with modern UI

- Drag-and-drop file upload functionality

- Knowledge source management (list, delete sources)

- Multi-provider LLM support (OpenAI, Anthropic, Groq)

- Advanced prompt engineering with hidden reasoning
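Conversation memory can be kept per session as a bounded window of recent turns, rendered in the role/content message format that chat-style APIs such as OpenAI's accept. The window size of five turns and the class name are hypothetical:

```python
from collections import defaultdict, deque


class ConversationMemory:
    """Keep the most recent `max_turns` (user, assistant) exchanges for one session."""

    def __init__(self, max_turns: int = 5) -> None:
        # deque(maxlen=...) silently drops the oldest turn once the window is full.
        self._turns: deque = deque(maxlen=max_turns)

    def add(self, user: str, assistant: str) -> None:
        self._turns.append((user, assistant))

    def as_messages(self) -> list[dict[str, str]]:
        """Flatten the window into alternating user/assistant role/content messages."""
        messages = []
        for user, assistant in self._turns:
            messages.append({"role": "user", "content": user})
            messages.append({"role": "assistant", "content": assistant})
        return messages


# One memory per backend session id; a new session starts with an empty history.
memories: defaultdict[str, ConversationMemory] = defaultdict(ConversationMemory)

memories["session-1"].add("What is BM25?", "A keyword ranking function.")
print(memories["session-1"].as_messages())
```

A fixed window keeps prompt size predictable; older context simply falls out rather than being summarized.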